Scenario

In computer science, there are several string-matching algorithms used to identify a position where one or several strings(patterns) are found within a larger string or text. In this post, 4 widely-used algorithms are applied to address the problem of single-pattern matching, including Brute Force (BF) algorithm, Rabin–Karp (RK) algorithm, Boyer–Moore (BM) algorithm and Knuth–Morris–Pratt (KMP) algorithm.

Here is the specification: given a pattern P and a searchable text T, both strings, determine whether the pattern appears somewhere in the text. Let’s say the length of P is n and the length of T is m, and also assume that n < m. And k = |Σ| be the size of the alphabet.

For ease of explanation, I define

T = 'penpineappleapplepen'

P = 'plep' where n = 4, m = 19 and k = 26.

Brute Force Algorithm

A.K.A Naive string-search algorithm, is the simplest algorithm to implement with the low efficiency. It’s just to try out every possible position that P might appear in T.

Rabin–Karp Algorithm

The RK algorithm uses a rolling hash to quickly filter out positions of the text that cannot match the pattern. And due to the condition that the positions of the text which have the same hash value as the pattern but may not actually match the pattern, if the hash value equals the hash value of the pattern, it performs a full comparison at that position to make sure completely matched.

As we can see, the hash function, which converts every string into a numeric value, play a key role in the process of applying the RK algorithm. There is a popular and effective rolling hash function called the Rabin fingerprint. But we do not discuss a specific hash function here because the selection of hash functions depends on the situation of the problem to be addressed. The general implementation of the RK algorithm is shown as follows.

1. computing the hash value for the substring s[i..i+m-1]of T and the pattern P;

The trick can be used with a hash roller. A rolling hash is a hash function designed specifically to allow the operation. This formulation of the rolling hash will determine the next hash value in constant time from the previous value.

s[i..i+m-1] = s[i-1..i+m-2] - s[i-1] + s[i+m-1]2. comparing the hash value h[i] with the hash value of P;

3. filtering out positions of the text that cannot match the pattern, and then checks for a match at the remaining positions.

Boyer–Moore Algorithm

The BM algorithm is efficient that is the standard benchmark for practical string-search literature. The key features of the algorithm are to match the pattern from right to left, and to skip the text in jumps of multiple characters instead of searching every single character in the text. The actual shifting offset is the maximum of the shifts calculated by two shift rules. Let’s take a look of them respectively first.

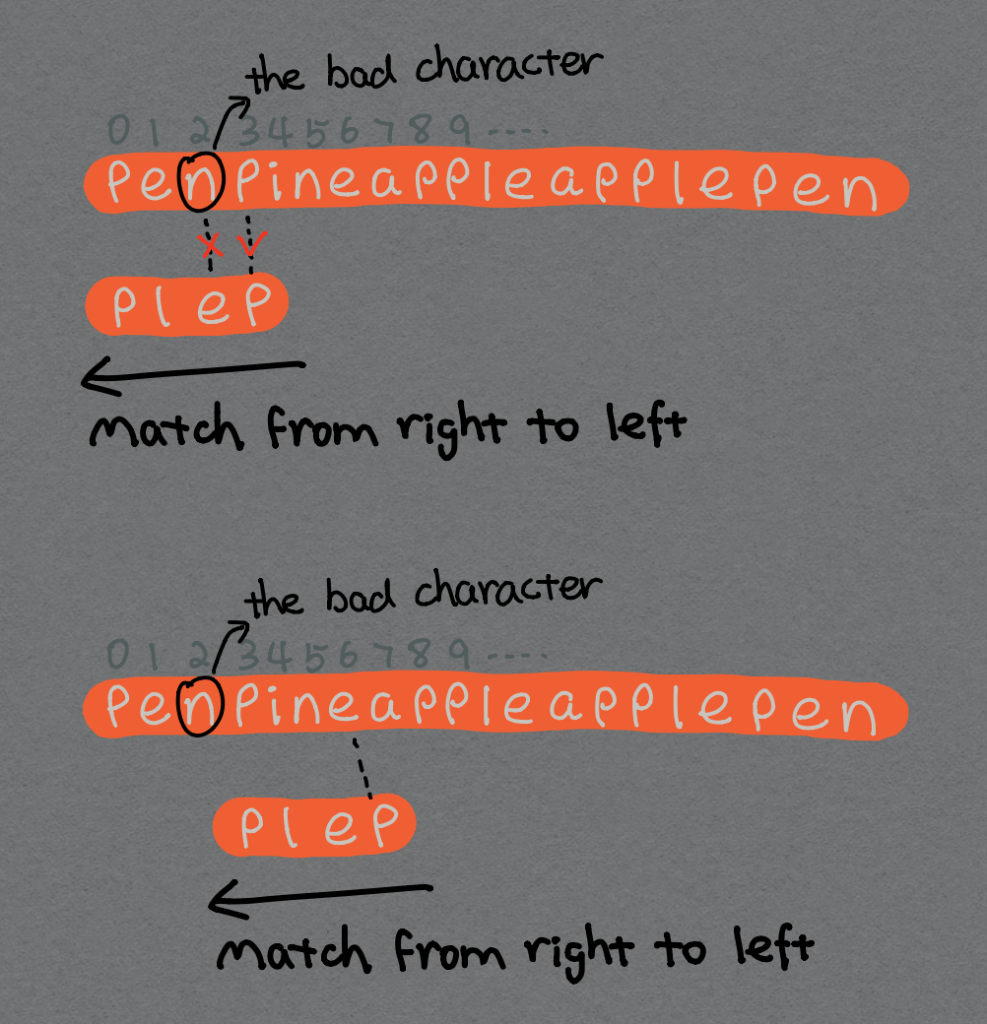

The Bad Character Rule

Assume c is the character of T at the position i, so T[i] = c. Upon mismatch as c:

- if

Pdoes not containc, shiftPentirely pasti(shift byi+1). e.g.T[2] = 'n', andPdoes not contain'n', then shiftPto right by3positions.

- otherwise, shift

Pto align the last occurrence (the most right) ofcinPwithT[i]. e.g.T[6] = 'e', andPcontains'e', then shiftPto align the most right of'e'inPwithT[6].

If we grab the bad character and match it in the pattern sequentially, this will be relatively inefficient and will affect the performance of this algorithm. Hence, it’s time to introduce the Boyer–Moore–Horspool algorithm or Horspool’s algorithm, which is an algorithm for finding substrings in strings. An array A[] with a length of 256 (i.e., bytes) can be produced to contain each symbol in the alphabet.

First, filling A[j] with length(P), where A[j] is not contained in P.

Then, filling A[j] with length(P) - j - 1, where A[j] is contained in P (if there are more than one character same as A[j], then j equals to the position of the last occurrence one).

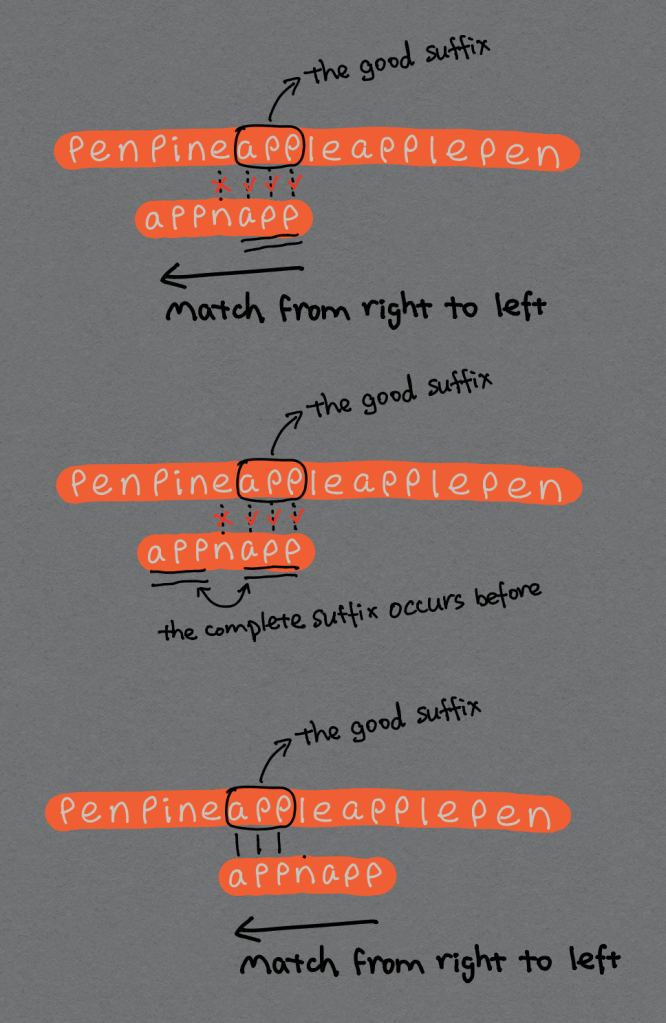

The Good Suffix Rule

Only applying the bad character rule cannot handle the situation such as

T = 'a'^n

P = 'b''a'^(m-1)Thus, a good suffix rule needs to be considered together. There is the key idea of the good suffix rule as follows.

Upon a mismatch, shift so that the already matched suffix of

Paligns with a previous occurrence of that suffix (or part of it) inP.

Crucial Ingredient:

COMP526 Unit 4-4 2020-03-03 String Matching: Boyer-Moore

the longest suffix ofP[j+1..m-1]that occurs earlier inP.

Two situations can be identified as:

- complete suffix occurs in

Pand the characters left of the suffix are not known to match. In order to interpret clearly,Pis redefined as'appnapp'. In this case,'e'inTdoes not match'n'inPso that'app'is the good suffix ofP. Then, we can find that the complete suffix'app'occurs once in the characters left of the suffix inP. Consequently, we shiftPto align them. If there are more than one positions matching the complete suffix, choose the most right one.

2. part of suffix occurs at the beginning of P. Well, P needs to be redefined again, let’s say P equals to 'ppnapp'. The certain suffix 'app' in P can be identified, and it does not occur anywhere else in P. However, a certain prefix 'pp' of P aligns the part of suffix 'app'. Hence, we shift P to align them. If there are more than one parts of the suffix matching the prefix, choose the most right one’s position to align.

An array B[] with the same length of P is employed to store shift if the match failed, which means for 0 <= j < m, B[j] stores shift if the match failed at P[j]. In this point, T[i+j+1..i+m-1] = P[j+1..m-1], while T[i] != P[j].

B[j] should be set as m-1-k, where k is the largest number so as to

P[j+1..m-1]is a suffix ofP[0..k]andP[j] != P[k-m+j+1]or

COMP526 Unit 4-4 2020-03-03 String Matching: Boyer-Moore

P[0..k]is a suffix ofP[j+1..m-1]

Knuth–Morris–Pratt Algorithm

On the way of the KMP algorithm, T and P are matched each character sequentially from left to right at the beginning, which is similar to the Brute Force algorithm. Not only that, but the KMP algorithm also uses the information from the pattern itself to avoid re-examine the characters that are anyway matched when a mismatch occurs.

Partial Match table (also known as “failure function”) can help determine the shift rules of P through pre-processing the pattern itself and compile a list of all possible fallback positions that bypass a maximum of hopeless characters while not sacrificing any potential matches in doing so. Here, array C[] is applied to store the same information is shown below. ‘Position’ indicates the position of the mismatched character in P, let’s say j, hence j‘s range is from 0 to m-1. ‘Prefix’ refers to the sub-pattern starting at P[0] and ending at P[j]. For each sub-pattern P[0..j], C[j] stores length of the maximum matching proper prefix which is also a suffix of the sub-pattern P[0..j].

C[j]= the longest proper prefix ofP[0..j]which is also a suffix ofP[0..j].

When a mismatch occurs,

- It’s known that

P[0..j-1]match withT[i-j…i-1](Note that j starts with0and increment it only when there is a match). - From the above definition, it’s also known that

C[j-1]is the count of characters ofP[0…j-1]that are both proper prefix and suffix. - According to the above two points, it’s not necessary to match the characters of

P[0…j-1]withT[i-j…i-1]again because these characters will anyway match. - Therefore, just shift

Pbyj - C[j-1], and start matching.

Efficiency

| Algorithms | Processing Time | Matching Time | Space |

| Brute Force | – | Θ(mn) | – |

| Rabin-Karp | Θ(m) | average Θ(n + m), worst Θ((n−m)m) | O(1) |

| Boyer-Moore | Θ(m + k) | best Ω(n/m), worst O(mn) | Θ(k) |

| Knuth–Morris–Pratt | Θ(m) | Θ(n) | Θ(m) |